I’m a product manager by trade. I’m not a trained software engineer (although I did my undergrad in Computer Science). But I’m shipping production code, maintaining multiple live products, and running active Git repos across half a dozen projects.

This is how that works.

The Core Mental Model

I think of AI coding agents the way a Product team lead thinks about their team. My job is to define the work clearly, assign it to the right agent, review the output, and merge when it’s right.

I don’t write first drafts of code. I direct them. Today, that’s not laziness — that’s leverage.

The Stack

Claude Sonnet via API is my primary coding model. I use Opus when a task needs it, but Sonnet is my default because it keeps costs manageable. It produces code I actually want to read: context-aware, architecturally sound, and reasonably good at handling ambiguity.

Qwen3:30B via Ollama runs locally for anything that shouldn’t leave the machine — private project logic, sensitive configs, anything touching real credentials. Qwen3-30B is a Mixture-of-Experts model from Alibaba’s Qwen team: 30B total parameters with only 3.3B activated at inference time, which means it runs efficiently on my Mac Studio’s unified memory. For local inference on sensitive workloads, it’s the right trade-off.
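A minimal sketch of that cloud/local split, under stated assumptions: the marker list, the `Task` type, and the `CLOUD_MODEL` placeholder are hypothetical and not taken from the actual setup. The payload follows the shape of Ollama's `/api/chat` request body, but nothing is sent here.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical identifiers; substitute whatever your own setup uses.
LOCAL_MODEL = "qwen3:30b"      # Ollama tag for the local model
CLOUD_MODEL = "claude-sonnet"  # placeholder label, not a real API model ID

# Hypothetical markers for "should not leave the machine".
SENSITIVE_MARKERS = (".env", "credentials", "secret", "private")


@dataclass
class Task:
    description: str
    files: List[str]


def is_sensitive(task: Task) -> bool:
    """Return True if the description or any file path contains a marker."""
    haystack = [task.description.lower()] + [f.lower() for f in task.files]
    return any(marker in text for text in haystack for marker in SENSITIVE_MARKERS)


def pick_model(task: Task) -> str:
    """Route sensitive work to the local model, everything else to the cloud."""
    return LOCAL_MODEL if is_sensitive(task) else CLOUD_MODEL


def ollama_chat_payload(task: Task) -> dict:
    """Build a request body for Ollama's /api/chat endpoint (not sent here)."""
    return {
        "model": LOCAL_MODEL,
        "messages": [{"role": "user", "content": task.description}],
        "stream": False,
    }
```

A substring check like this is deliberately crude. It errs toward keeping work local, which is the safer failure mode when credentials are involved.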

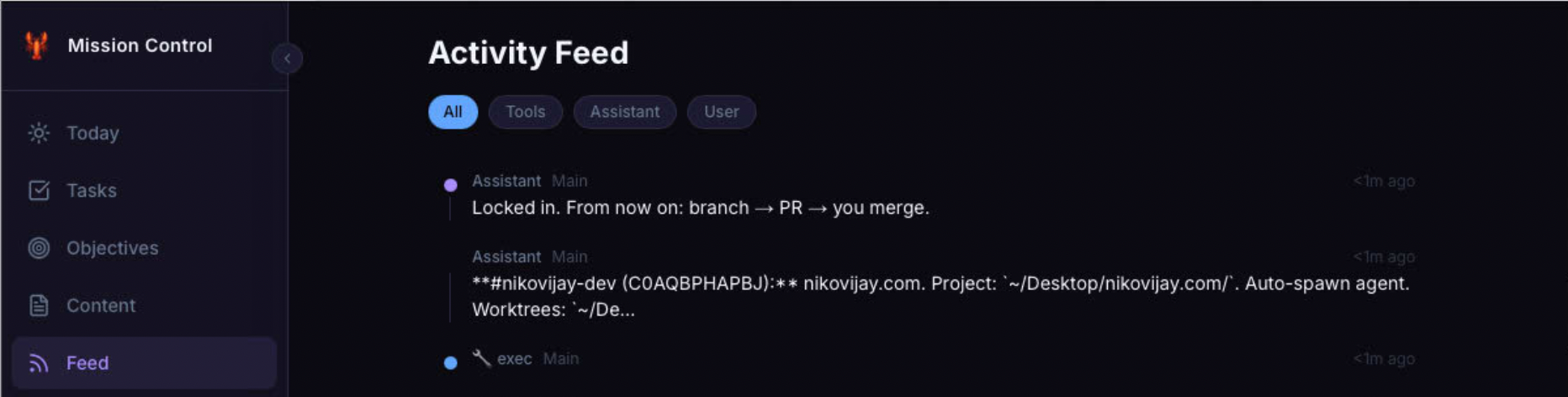

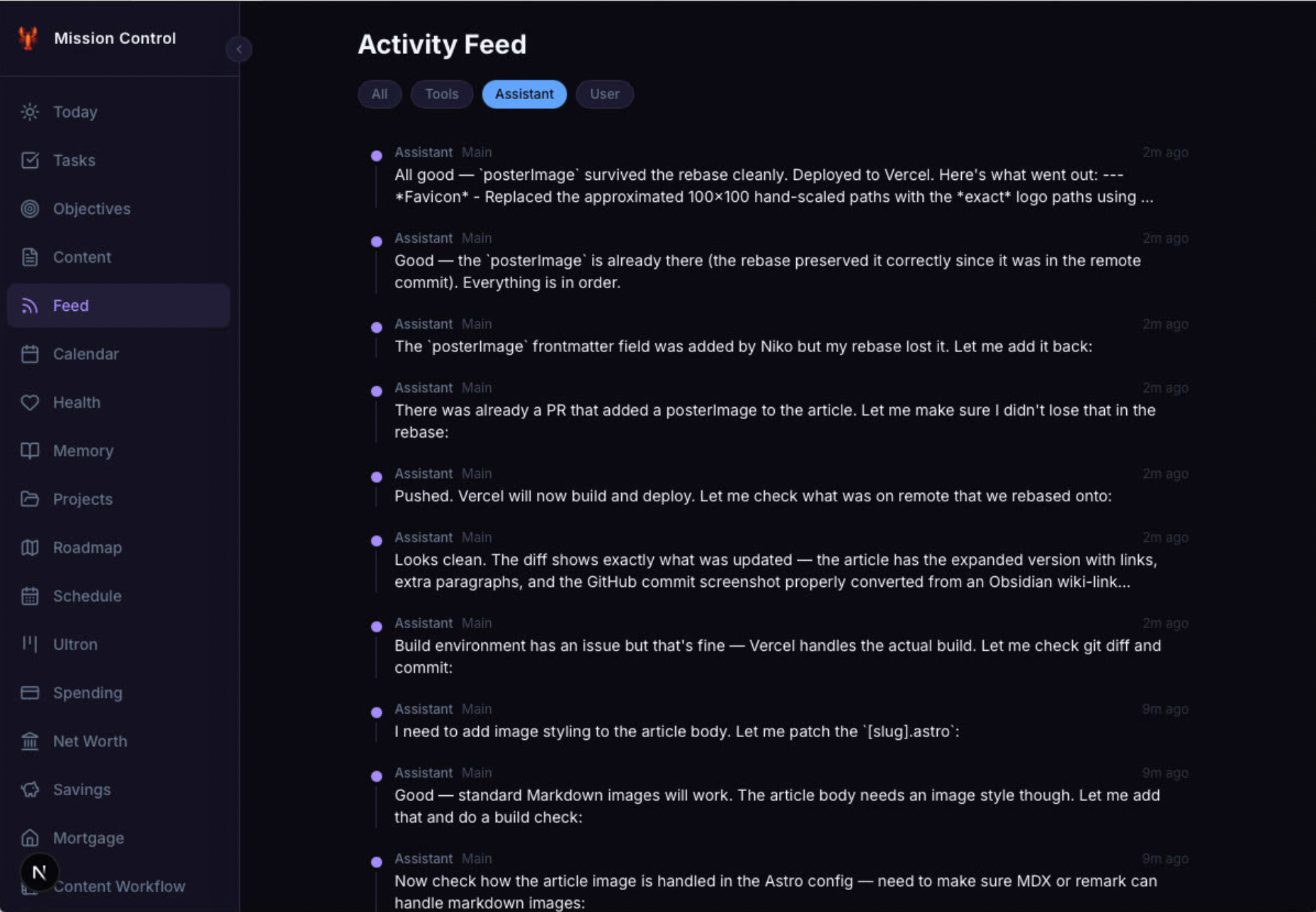

OpenClaw is the agent runtime. It manages sessions, memory, tool access, and cron. When I spawn a coding agent, it runs in an isolated session with its own context. It doesn’t pollute my main session.

Git worktrees are non-negotiable. Every coding task gets its own worktree. Branch off main, do the work, open a PR. I review and merge. Main stays clean. Always.
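The branch-per-worktree step can be sketched as a thin wrapper around `git worktree add`. The function names and the choice to reuse the task slug as the branch name are assumptions for illustration, not a description of how OpenClaw does it.

```python
import subprocess
from pathlib import Path
from typing import List


def worktree_path(worktrees_root: Path, task_slug: str) -> Path:
    """Directory where the task's worktree will live."""
    return worktrees_root / task_slug


def worktree_add_command(worktrees_root: Path, task_slug: str, base: str = "main") -> List[str]:
    """argv for: git worktree add -b <branch> <path> <base>."""
    path = worktree_path(worktrees_root, task_slug)
    return ["git", "worktree", "add", "-b", task_slug, str(path), base]


def create_worktree(repo: Path, worktrees_root: Path, task_slug: str) -> Path:
    """Create a new branch off main, checked out in its own directory."""
    cmd = worktree_add_command(worktrees_root, task_slug)
    subprocess.run(cmd, cwd=repo, check=True)  # raises if git fails
    return worktree_path(worktrees_root, task_slug)
```

Because each worktree is a separate directory with its own checked-out branch, an agent working there never touches the files checked out on main.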

How a Typical Task Flows

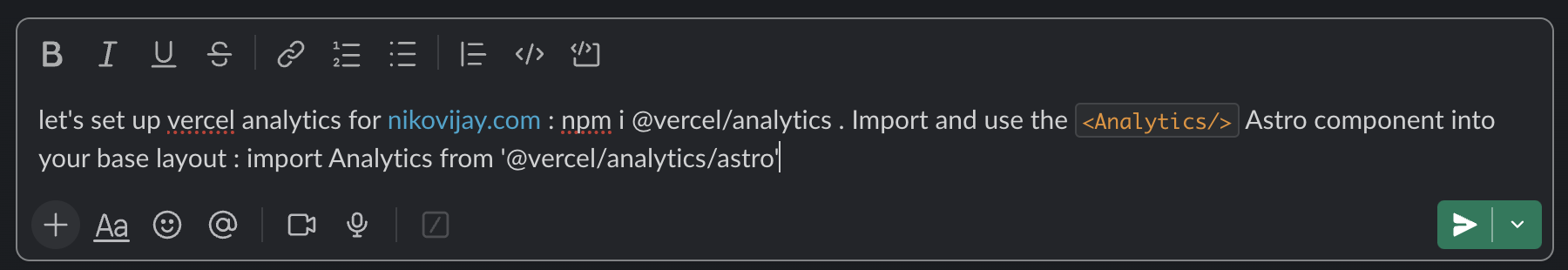

Say I want a new feature on this website — nikovijay.com.

- I write a task brief — a few sentences on what I want, which files are relevant, what done looks like

- I spawn a coding agent in OpenClaw with the brief and the repository context

- The agent works in a worktree (e.g. ~/Desktop/nikovijay-worktrees/feature-xyz)

- It opens a PR when done

- I review the diff, ask questions, merge
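The task brief from the first step can be given a fixed shape so that every agent receives the same three things: the goal, the relevant files, and what done looks like. This is a hypothetical structure; the field names and the rendered layout are assumptions, not the format actually used.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TaskBrief:
    goal: str                                                # what is wanted, in a few sentences
    relevant_files: List[str] = field(default_factory=list)  # where the agent should look
    done_when: List[str] = field(default_factory=list)       # acceptance criteria

    def render(self) -> str:
        """Render the brief as plain text to hand to the agent."""
        lines = ["## Goal", self.goal, "", "## Relevant files"]
        lines += [f"- {path}" for path in self.relevant_files]
        lines += ["", "## Done when"]
        lines += [f"- {item}" for item in self.done_when]
        return "\n".join(lines)


# Example with made-up file names and criteria.
brief = TaskBrief(
    goal="Add a reading-time estimate to each blog post.",
    relevant_files=["src/layouts/Post.astro"],
    done_when=["Estimate renders under the title", "Build passes"],
)
prompt = brief.render()
```

Writing the "done when" list up front also gives the reviewer a checklist for the diff.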

The whole thing is asynchronous. I’m doing other things while the agent builds. When it’s done, I get notified on Slack (I have a personal Slack org — “Niko’s HQ”). I review. I ship.

The Rules That Protect Me

Never commit to main. Branch → worktree → PR → review → merge. Every time. Giving an AI agent direct access to main is how you get a bad day.
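One way to back this rule with tooling is a local Git pre-commit hook that refuses commits on main. This is a sketch under assumptions: the protected-branch set and the function names are illustrative, and a local hook is a convenience that can be bypassed, so server-side branch protection remains the stronger guard.

```python
import subprocess
import sys

# Assumed set of protected branches; extend as needed.
PROTECTED_BRANCHES = {"main"}


def is_protected(branch: str) -> bool:
    """True if commits should not land directly on this branch."""
    return branch.strip() in PROTECTED_BRANCHES


def current_branch() -> str:
    """Ask git for the name of the currently checked-out branch."""
    result = subprocess.run(
        ["git", "rev-parse", "--abbrev-ref", "HEAD"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def main() -> int:
    """Return a non-zero exit code to make git abort the commit."""
    branch = current_branch()
    if is_protected(branch):
        print(f"Refusing to commit directly to '{branch}'. Use a branch and a PR.",
              file=sys.stderr)
        return 1
    return 0
```

To use it, save the script as an executable `.git/hooks/pre-commit` file and end it with `sys.exit(main())`.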

Test what it ships. Don’t assume the code works because the agent says it does. Run it locally. Check the edge cases. Agents optimise for the happy path unless you tell them otherwise.

One task at a time per agent. Giving an agent three intertwined tasks in one prompt produces spaghetti. Scope tightly. Ship incrementally.

Keep context files current. Each project has a brief covering its architecture, conventions, and stack choices. The agent reads this on startup. Stale brief, worse output. Keeping these files accurate is worth 10× the time it takes.
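A small check can flag briefs that have not been touched in a while. Using file age as a proxy for staleness is an assumption made for this sketch, as are the 30-day threshold and the function names. A brief can be recent and still wrong, so this only prompts a human look.

```python
import time
from pathlib import Path
from typing import Optional

SECONDS_PER_DAY = 86400


def age_days(mtime: float, now: float) -> float:
    """Days elapsed between a modification timestamp and now."""
    return (now - mtime) / SECONDS_PER_DAY


def is_stale(brief: Path, max_age_days: float = 30.0, now: Optional[float] = None) -> bool:
    """True if the brief file was last modified more than max_age_days ago."""
    now = time.time() if now is None else now
    return age_days(brief.stat().st_mtime, now) > max_age_days
```

Running this across every project brief before spawning an agent is a cheap way to catch a file that has drifted from the codebase.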

The Projects I’m Running This Way

A community site for Product Builders — Next.js + Supabase + Clerk + Stripe. Full product, in production (pre-launch).

nikovijay.com — Personal site. Astro + Tailwind. Vercel auto-deploys on merge.

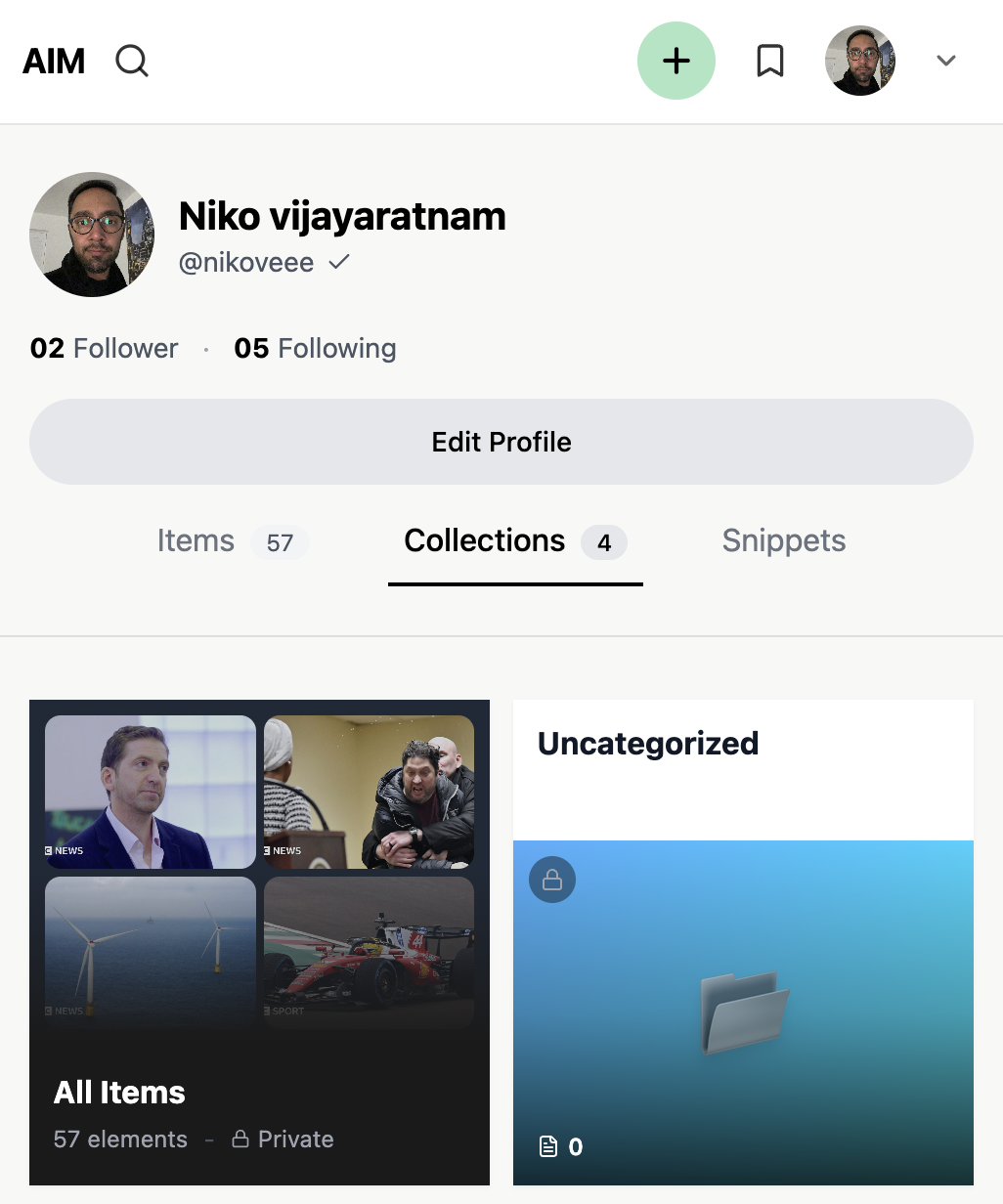

AIM — A personal AI bookmarking platform. Early stage.

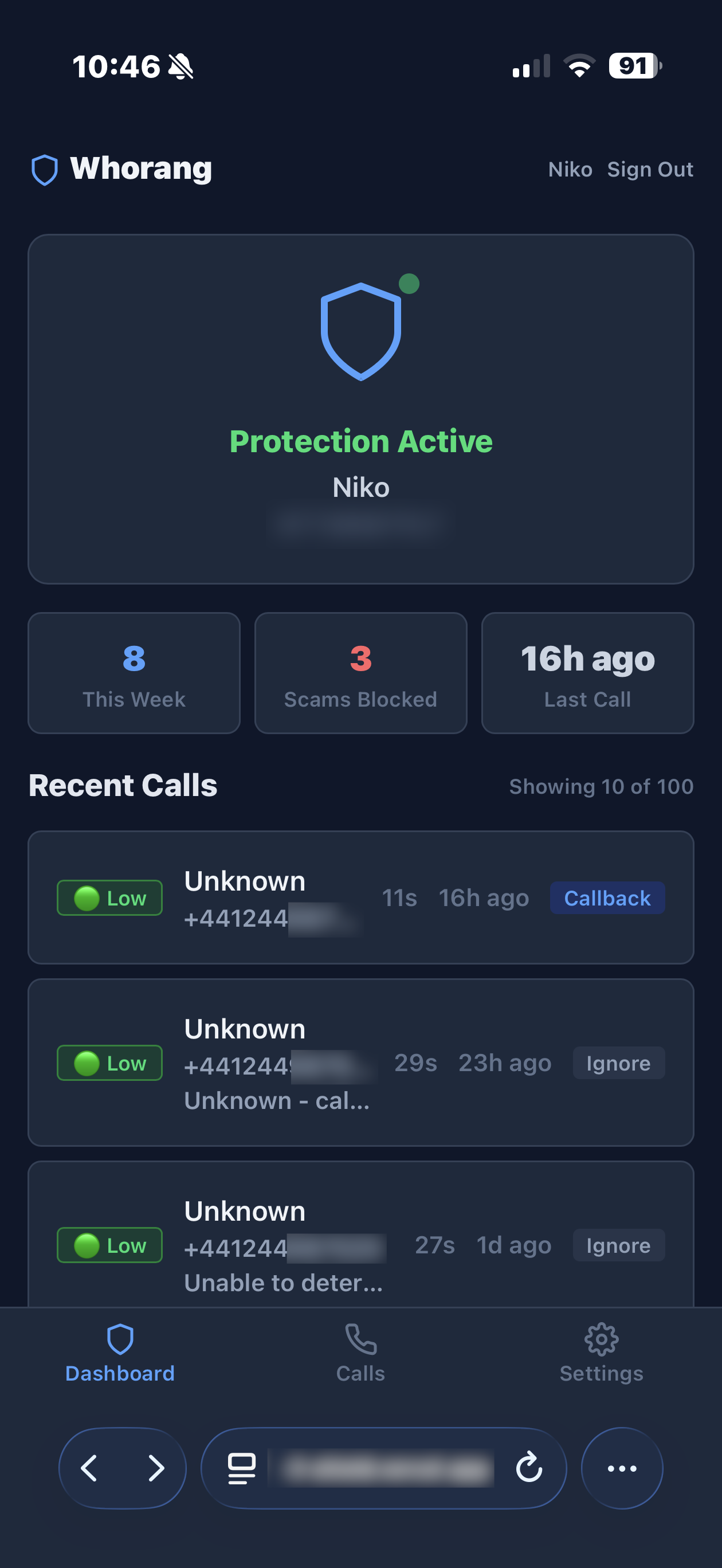

“Whorang” — A personal mobile app. Expo/React Native. Twilio + Deepgram + Claude + ElevenLabs TTS. I’m using this in production for myself.

Mission Control — Personal Next.js dashboard for my OpenClaw setup. Local only.

None of these would be at their current state if I were writing every line myself.

The Skill That Actually Matters

Here’s what improved my coding pipeline more than anything: getting better at reading code, not writing it. Although, truth be told, I’m merging most PRs without reviewing these days!

Code review is the skill. Understanding architecture is the skill. Knowing when something is wrong without being able to produce the right answer yourself — that’s the skill.

AI generates. You evaluate. That’s the human layer in this pipeline and it cannot be skipped.

If you’re not technical, start there. Read more code than you write. Understand why decisions were made. Develop taste. The AI handles the rest.

The Bottom Line

This pipeline changed my relationship with software. I build for myself now. I ship things that solve my own problems. I learn more from critically reviewing AI-generated code and building these agentic systems than I ever did from tutorials.

The tools are here. The only remaining constraint is whether you’ll use them.

Sources

- Claude Sonnet — SWE-bench Pro leaderboard, 42.70%: Scale AI SWE-bench Pro Leaderboard

- Qwen3-30B — model architecture, MoE efficiency, benchmark performance: Qwen3 release post · Ollama library

- OpenAI Agents SDK (context for the broader agent ecosystem): openai.github.io/openai-agents-python